“Does it scale?” This is one of the most common questions we get once people figure out how Vaadin works. Vaadin runs all UI logic on the server side, so user interaction in the client-side UI results in a large number of request-response round trips to the server. Clearly that cannot scale, right?

We took this claim as a challenge and wanted to prove it wrong. For this purpose we built a fictional movie ticket box office as a Vaadin web application. And we wanted to think big. The application sells tickets to theaters all over the world and must be able to handle thousands of concurrent users making their purchases at the same time.

From an architectural point of view, the application uses MySQL as the underlying database, EhCache and Memcached for keeping data in memory for efficient access, Vaadin as the UI layer, and Spring 3 for wiring it all up. The build process is automated with Ant, using Ivy to manage dependencies. The full source code is available in our SVN repository.

To simulate thousands of concurrent users we decided to use Apache JMeter. We recorded a test script that runs for about 2.5 minutes and purchases two tickets. Running JMeter to simulate a large number of users requires a considerable amount of CPU and memory, so we needed some serious hardware. That’s why we decided to do the whole testing process in the Amazon Web Services (AWS) cloud.

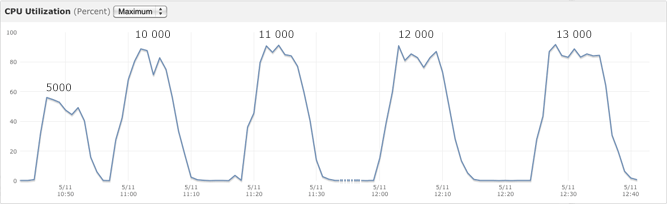

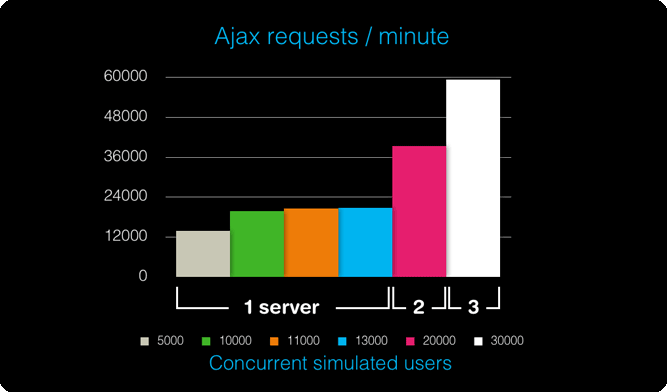

And now to the part you’ve all been waiting for: the results. First we ran the test script against a single EC2 large instance running the QuickTickets application in a Tomcat server (with Amazon RDS as the MySQL server), gradually increasing the thread count. The single server could handle 20 622 AJAX requests / minute (translating into 2748 purchase scenarios / minute by 11 000 concurrent simulated users) before the error rate (rejected connections) exceeded 1%. See the graph below for maximum CPU utilization during the tests.

To put that number into perspective, real-world movie ticket sales in 2009 were 1.4 billion tickets in the US and Canada (source: MPAA - 2009 Theatrical Market Statistics). Spread evenly over the year, that's around 2600 sold tickets / minute, which translates to some 1300 purchase scenarios / minute assuming two tickets per transaction. So basically a single server could easily handle the load of selling all tickets for the US and Canada!
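The back-of-the-envelope comparison above can be reproduced with a few lines of Java. This is only a sketch: the class and method names are made up for illustration, and it assumes that sales are spread evenly over a 365-day year, which real box office traffic certainly is not. With exact division the result lands slightly above the rounded figures quoted in the text.

```java
public class TicketLoad {
    // Minutes in a non-leap year; assumes sales are spread evenly (a simplification).
    static final long MINUTES_PER_YEAR = 365L * 24 * 60;

    // Average number of tickets sold per minute for a given yearly total.
    static double ticketsPerMinute(long ticketsPerYear) {
        return (double) ticketsPerYear / MINUTES_PER_YEAR;
    }

    // Average number of purchase scenarios per minute, given tickets per purchase.
    static double purchasesPerMinute(long ticketsPerYear, int ticketsPerPurchase) {
        return ticketsPerMinute(ticketsPerYear) / ticketsPerPurchase;
    }

    public static void main(String[] args) {
        long ticketsSold2009 = 1_400_000_000L; // US and Canada, figure from the article
        int ticketsPerPurchase = 2;            // the test script buys two tickets
        int measuredPurchasesPerMinute = 2748; // single-server result from the article

        double tickets = ticketsPerMinute(ticketsSold2009);
        double purchases = purchasesPerMinute(ticketsSold2009, ticketsPerPurchase);
        double headroom = measuredPurchasesPerMinute / purchases;

        System.out.printf("Tickets / minute:   %.0f%n", tickets);
        System.out.printf("Purchases / minute: %.0f%n", purchases);
        System.out.printf("Headroom factor:    %.2f%n", headroom);
    }
}
```

Under these assumptions the single server has roughly twice the capacity of the average load. Peak hours would of course look very different from the yearly average.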

As if that wasn't enough, we decided to find out how many more tickets we could sell with a setup consisting of more than one EC2 instance. By setting up first two and then three servers, we could easily see that the application scales out really nicely. With two servers we got to 5313 purchase scenarios / minute. This two-server setup could handle all the movie ticket sales in the world. Adding yet another server brought us up to 7990 purchase scenarios / minute.
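To see how close to linear this scale-out is, the measured throughput can be compared against the single-server baseline. The following is a minimal sketch with made-up class and method names. It uses only the three throughput figures reported in this article, and "efficiency" here simply means speedup divided by the number of servers.

```java
public class ScaleOut {
    // Throughput relative to the single-server baseline.
    static double speedup(double baseline, double measured) {
        return measured / baseline;
    }

    // Fraction of ideal linear scaling achieved with the given number of servers.
    static double efficiency(double baseline, double measured, int servers) {
        return speedup(baseline, measured) / servers;
    }

    public static void main(String[] args) {
        // Purchase scenarios / minute for 1, 2 and 3 servers, as reported in the article.
        double[] throughput = {2748, 5313, 7990};
        double baseline = throughput[0];

        for (int i = 0; i < throughput.length; i++) {
            int servers = i + 1;
            System.out.printf("%d server(s): speedup %.2f, efficiency %.1f%%%n",
                    servers,
                    speedup(baseline, throughput[i]),
                    100 * efficiency(baseline, throughput[i], servers));
        }
    }
}
```

For these figures, both the two-server and the three-server setups reach a little under 97% of ideal linear scaling.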

If you want to read how the testing was done in detail, see the Vaadin Scalability Testing with Amazon Web Services article. It provides all that is needed to reproduce the QuickTickets tests in the Amazon cloud environment and works as a good starting point for testing the scalability of your own applications.